Applied Case: The Therac-25

A medical accelerator is not a neutral machine that switches teams to dangerous at the moment it malfunctions.

While on the topic of automated harms:

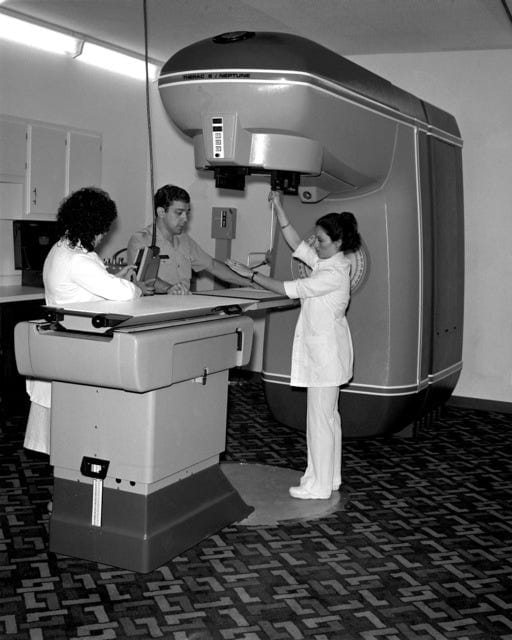

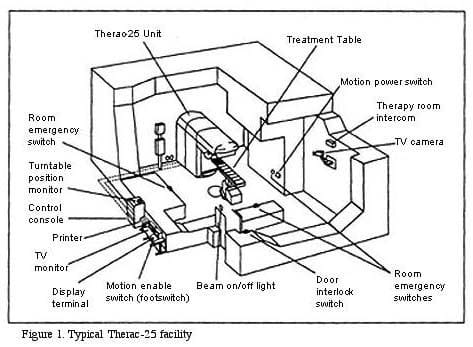

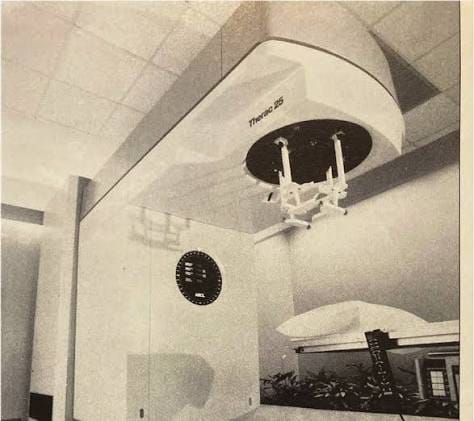

The Therac-25 was a computerized radiation therapy machine. It was used to treat cancer.

This case does not begin with software, computers, or any engineering failure. The core of this topic is human patients whose reachable futures depended on an automated machine doing controlled damage to their fields correctly.

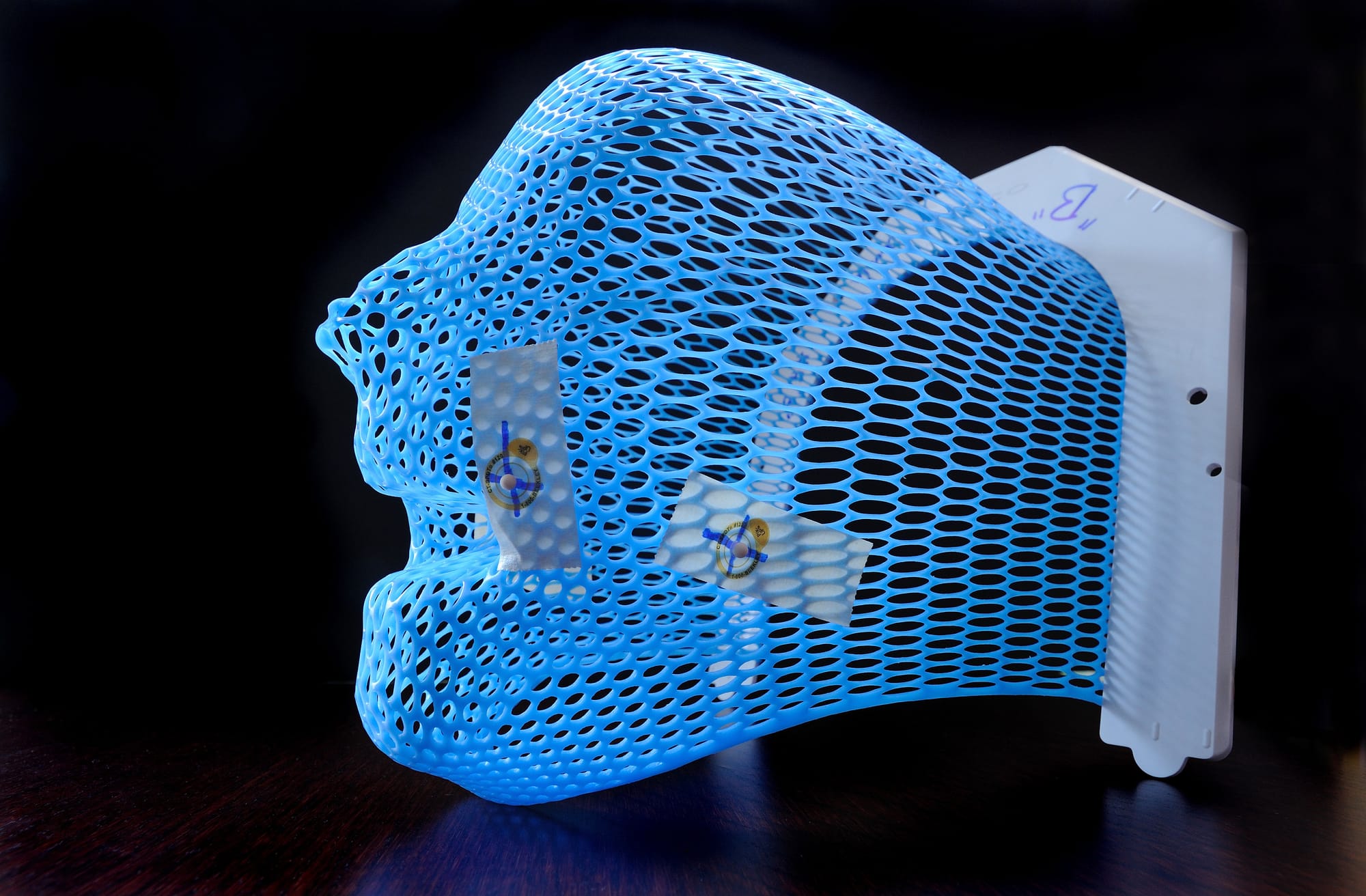

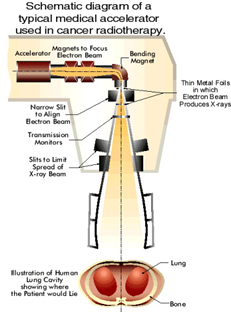

Radiation therapy, as a process, is already morally unusual. Here, we are using a dangerous, harmful force for a healing purpose. The point of this therapy is to damage cancer cells while preserving as much healthy tissue and future life in the broader human as possible. A radiation therapy machine is clearly never harmless. It is safe only if the harmful power is constrained, measured, aimed, limited, checked, and stopped when the field begins to leave the intended path.

So, a medical accelerator is not a neutral machine that switches teams to dangerous at the moment it malfunctions. This is a dangerous machine we have made medically usable by encircling it in a field of constraints.

Between 1985 and 1987, those constraints failed.

The Therac-25 machine massively overdosed patients. Some were killed, others were severely injured. The technical details do matter, but they are not the subject of this article. The Therac-25 should not be reduced to “a software bug killed people, let's debug it,” because that description is too small and the mindset too narrow.

The deeper moral problem was a damaged safety field. The machine had power over the continuance of the field. The patients bore most of that vulnerability. The operators, meanwhile, had limited perception. The interface used gave them inadequate warnings. The manufacturer had misplaced their confidence.

And the hospitals depended on this machine. Reports of injury were difficult to interpret and slow to alter the whole system. Regulators only entered after the harm had already occurred.

The Medical Field.

A patient receiving radiation therapy is in an extremely asymmetrical position.

I do not think, from what I can gather, that the patient is permitted to inspect the machine. The patient cannot verify the beam, or independently know whether the delivered dose matches their prescribed dose. The patient cannot see the invisible transition between therapy and injury. The patient’s future entirely depends on a chain of trust passing through a system of physicians, physicists, operators, engineers, software, hardware, safety procedures, maintenance records, incident reports, and institutional honesty.

That dependence is not automatically wrong.

Medicine always involves some dependence. A patient undergoing surgery, chemotherapy, anesthesia, imaging, or radiation therapy cannot personally validate every technical layer of their own procedure.

That dependence still increases moral burden.

If a patient must entrust their body to a system they cannot themselves inspect, then the system has to preserve paths of detection, intervention, correction, and accountability. It must not require the patient to prove the machine harmed them after the machine has already made getting that proof very difficult.

In the Therac-25 accidents, patient reports were part of the field. Some patients reported sensations of burning or shock. Those reports should have been treated as contact with harm: information from the exposed locus that something had gone wrong and futures may be closing.

Instead, the broader system had difficulty absorbing that information, and many futures were, in fact, closed.

The patient’s body had registered the transition well before the institution came up with a stable explanation for it. Because the machine had appeared calibrated, because the interface did not clearly disclose the overdose, because the event could not easily be reproduced, and because the existing model of the machine’s safety did not make the accident legible, the warning path from injury to correction was greatly narrowed.

The Machine Field.

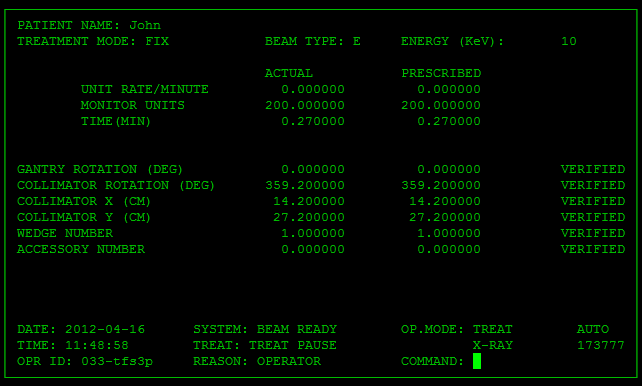

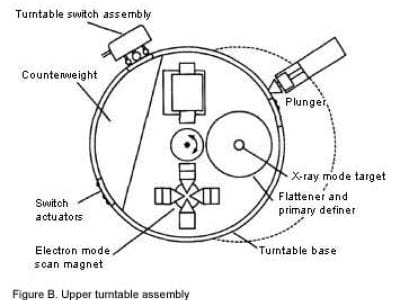

The Therac-25 was a machine designed to deliver powerful radiation in more than one treatment mode.

The machine could provide different forms of therapy depending on the clinical need. In its intended use, that was a big opening: one machine could now serve more patients, offer more treatment flexibility, and make advanced therapy more available.

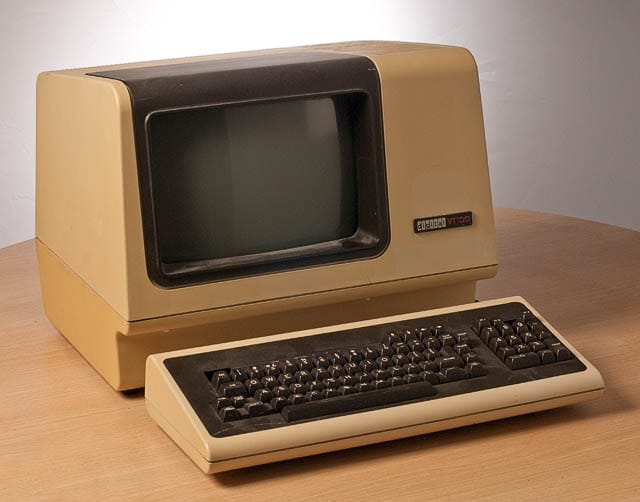

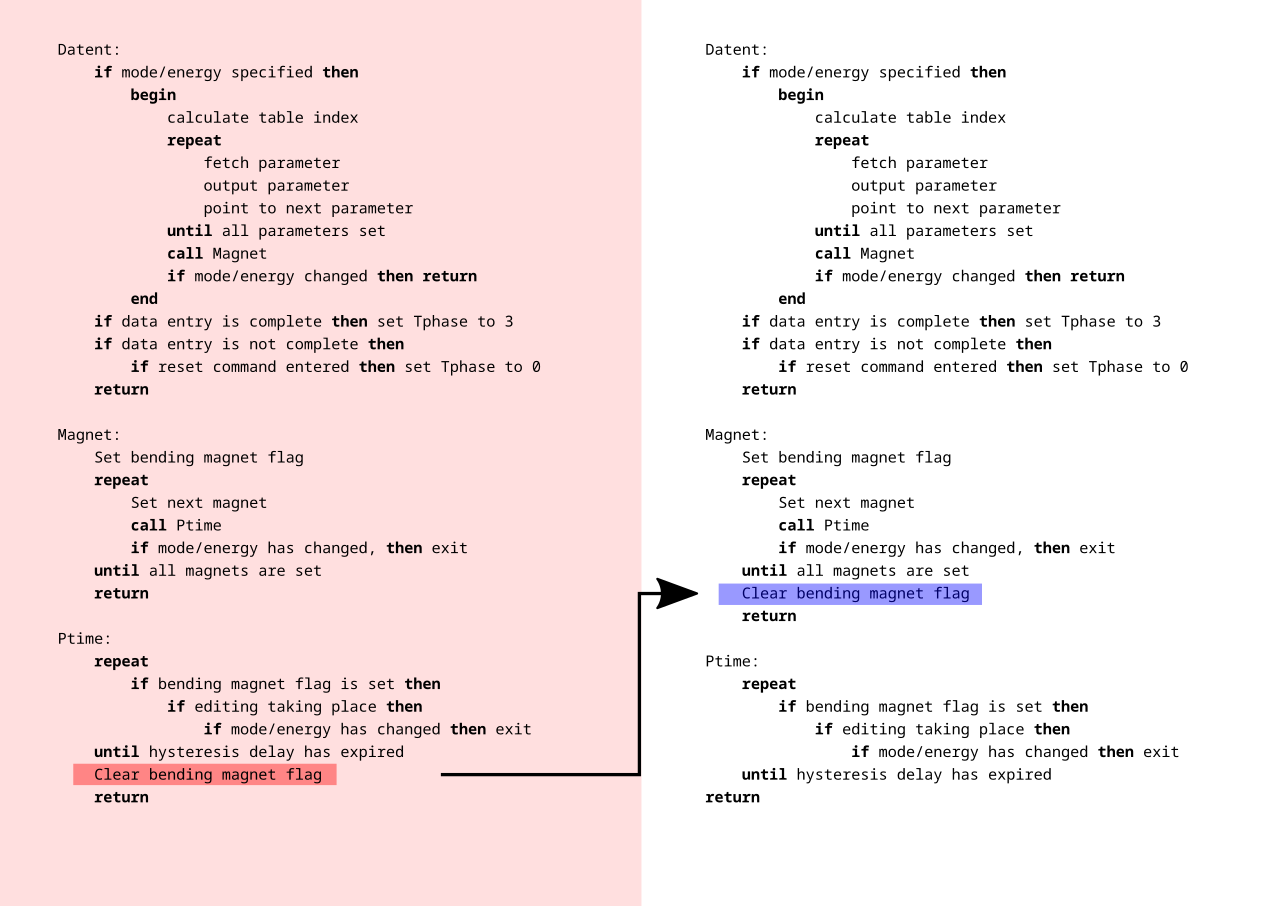

The problem is that the machine’s safety depended heavily on computerized control systems from the 1980s. Earlier machines had used more independent hardware safety mechanisms and interlocks. The Therac-25 relied more on software to manage safety-critical conditions.

This is not automatically the wrong choice at all. Software can certainly improve safety, especially today. Computer control can reduce some errors, add checks, guide operators, enforce procedures, and just generally make complex systems more usable, and futures more reachable.

But when software replaces independent constraints, the moral burden changes. The system becomes less safe if the software is treated as a magical authority rather than as what it is: one highly fallible layer inside a larger safety field. A software-controlled medical machine must still always preserve independent ways for reality to object to the procedure.

A safety-critical system has many paths by which harm can be stopped: the machine detects the unsafe state; the hardware prevents the dangerous configuration; the software refuses the command; the interface tells the operator clearly what happened; the operator can stop the treatment; the patient can communicate distress and be heard; the institution can connect one incident to another very similar incident; the manufacturer can receive reports and act on them; the regulator can intervene; and future patients can be protected before the harm recurs.

With Therac-25, far too many of these paths were made unnecessarily narrow all at once.
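The idea of independent stop paths can be made concrete with a small sketch. This is not the Therac-25's actual control logic; every name here (`BeamRequest`, `attenuator_in_place`, `MAX_DOSE`, and the three checks) is a hypothetical stand-in, chosen only to show what it means for one layer to object when another layer is wrong.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class BeamRequest:
    """Hypothetical snapshot of one treatment request (all fields illustrative)."""
    software_state_ok: bool    # what the control software believes
    high_power: bool           # whether the beam is at high power
    attenuator_in_place: bool  # physical state, read by an independent sensor
    dose: float                # requested dose, arbitrary units


# A check returns None when it has no objection, or a reason string when it does.
Check = Callable[[BeamRequest], Optional[str]]

MAX_DOSE = 10.0  # made-up limit, for illustration only


def software_check(req: BeamRequest) -> Optional[str]:
    return None if req.software_state_ok else "software: inconsistent state"


def hardware_interlock(req: BeamRequest) -> Optional[str]:
    # Deliberately ignores software_state_ok: it looks only at physical state.
    if req.high_power and not req.attenuator_in_place:
        return "hardware: high power without attenuator"
    return None


def dose_limit(req: BeamRequest) -> Optional[str]:
    return "limit: dose above maximum" if req.dose > MAX_DOSE else None


def objections(req: BeamRequest, checks: List[Check]) -> List[str]:
    """Collect every objection; a check that crashes counts as an objection."""
    found = []
    for check in checks:
        try:
            reason = check(req)
        except Exception as exc:  # fail closed, never open
            reason = f"{check.__name__}: check failed ({exc})"
        if reason is not None:
            found.append(reason)
    return found


def beam_permitted(req: BeamRequest, checks: List[Check]) -> bool:
    """The beam is permitted only if no layer objects."""
    return not objections(req, checks)


ALL_CHECKS = [software_check, hardware_interlock, dose_limit]

# The software believes all is well, but the physical configuration is unsafe.
unsafe = BeamRequest(software_state_ok=True, high_power=True,
                     attenuator_in_place=False, dose=2.0)

print(beam_permitted(unsafe, [software_check]))  # software as the only layer
print(beam_permitted(unsafe, ALL_CHECKS))        # independent layers
print(objections(unsafe, ALL_CHECKS))
```

With software as the only layer, the unsafe request is permitted, because the software's belief is the only thing consulted. With the independent layers in place, the hardware interlock vetoes the same request. The design choice that matters is that any single layer can stop the beam, and no layer can overrule another.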

The Operator Field.

The operator in a safety-critical system is often imagined by us as the human backstop.

A human operator can only serve as that backstop if the surrounding field gives them enough perception, authority, time, and truthful information to act. If the interface hides the danger, or warning messages are unclear, or errors are common enough to become normal, or the machine reports no dose or an impossible state, while production pressure and treatment schedules continue anyway, then the operator is not some free-floating moral agent with full access to the truth and full capability to intervene to stop harm.

The operator of the machine is one locus inside of the system.

“Operator error” can become a way of concealing poor field design. Sometimes an operator truly does make a negligent choice, but in many technical systems, what appears afterward as operator error is actually the point where a damaged field became materially visible.

The Therac-25 operators were not standing outside the machine on the transcendent plane with godlike knowledge. These people were interacting with an interface that mediated what the machine was doing and what they knew about what the machine was doing. Their perception of the patient, the treatment, and the error condition was filtered through earlier design decisions.

If the machine gives unclear messages, the operator’s future obviously narrows.

If the machine frequently produces non-serious malfunctions, the operator’s sensitivity to warnings narrows.

If the system allows a dangerous state while presenting the treatment as paused, corrected, or not delivered, the operator’s ability to actually care for the patient narrows.

The operator can still be causally involved, and make mistakes. But Modal Path Ethics does not stop asking questions at causal involvement. It asks what futures were actually reachable for each locus inside the field.

Any safety system that requires heroic interpretation from its ordinary operators is already deeply morally defective.

The Manufacturer Field.

The manufacturer occupies a very different position.

The manufacturer designs the machine, knows the architecture, controls the documentation, receives the reports, communicates with the users, revises the safety procedures, and can generally speaking alter the product. That gives it a lot more leverage, which always comes with responsibility.

The moral problem here is not that the Therac-25 had defects, though it should not have had them. Complex systems can and typically will have defects. The deeper issue is how the manufacturer’s confidence, procedures, and responses shaped the field after the first harms had already appeared.

When a safety-critical system produces a catastrophic event that does not fit the manufacturer’s model, the burden of proof should shift immediately.

The first question should never be: can we reproduce the accident beyond doubt before we restrict the machine?

The question should always be: can we justify continued operation of this system while the accident remains unexplained to us?

If a machine can absolutely deliver lethal or devastating harm, then an unexplained, serious injury is not just some anomaly in the field. It is very serious evidence that the field contains a previously untracked path to total catastrophe. Until that path is understood and blocked, continued operation transfers unacceptable risk to future patients.

The future patients do not consent to being the experiment that clarifies the field to you.

This is where institutional confidence becomes very dangerous. A manufacturer may sincerely believe its system is safe. It may have a library of calculations, tests, assumptions, analyses, and prior experience. Those all still matter.

But when reality presents you with contradiction, that contradiction must outrank your model.

The Regulatory Field.

Regulators enter late because private confidence is not enough.

A medical device that can kill patients through invisible technical failure cannot be governed only by manufacturer assurances and local clinical trust. The public field clearly needs independent oversight because the exposed loci are too vulnerable in the situation and the information asymmetry at play is too severe.

Regulation is not therefore inherently good.

Regulation can certainly be slow, captured, underinformed, formalistic, or distortive, but in a field like this, the absence of strong oversight narrows the futures of patients who cannot meaningfully protect themselves.

The regulator’s moral role is to preserve paths that private actors may fail to preserve on their own. These include reporting paths, investigation paths, recall paths, disclosure paths, design standards, independent review, public learning, and future prevention of harm.

The Therac-25 case shows us clearly why these paths matter. When a safety-critical machine first fails, the harm is not limited to the first patient injured. Every single delayed report, unclear notice, incomplete fix, or unshared lesson leaves later patients stuck inside a field where the same closure remains reachable to them. The first patient had already been in that field before the harm became material.

Regulation should be seen as institutional memory of the moral architecture, not as simple bureaucracy floating above the real event.

This is More Than Software.

The Therac-25 is often narrativized as a software disaster.

Software was definitely central. The machine relied on software in safety-critical ways, and the accidents are still taught because they do show that software errors can kill humans.

But the moral field doesn't end at software. A software bug is a local mechanism. It is not the whole harm.

The structural harm of Therac-25 required a field in which all the following obtain:

A dangerous machine could enter clinical use, while software could become a single point of catastrophic failure, while hardware interlocks could be reduced or removed, while operators could receive inadequate information, while patients’ reports could fail to produce immediate system-wide correction, while the manufacturer could remain overconfident, while hospitals could continue use under uncertainty, while regulators could arrive well after repeated harm, and while future patients could remain exposed.

This is a structural disaster that cannot be compressed into "the software was buggy, computers seem dangerous."

Modern systems are, obviously, increasingly software-mediated. Human medicine, transportation, finance, energy, education, law enforcement, employment, communication, and warfare all use systems whose internal operation is difficult or sometimes impossible for ordinary participants to inspect.

Punching every failure ticket as a "bug" means allowing the field that actually produced the harm to continue reproducing unchecked.

Controlled Harm.

Radiation therapy makes the Therac-25 case even sharper because the treatment itself involves harm.

The purpose of this machine is to damage human tissue. The goal is to damage a targeted threat-region in order to preserve the patient’s wider future. The therapy is clearly justified as Better, because the local damage is constrained and directed toward reopening or preserving a larger path of life.

The presence of cancer already narrows the patient’s future. Treatment may also impose pain, risk, fear, radiation exposure, surgery, drug toxicity, and lasting side effects. The morally relevant question is always whether the treatment path preserves more weighted future-space than the disease path and its alternatives.

In successful radiation therapy, harm is constrained into the service of a less-closing path. In the Therac-25 accidents, this structure broke down. The same power that was supposed to preserve the patient’s future instead destroyed or severely narrowed it.

Safety constraints are therefore what makes this machine morally permissible at all, not external to the moral status of the machine. A radiation machine without adequate safety architecture is not a healing instrument with some risk attached. The Therac-25 was an uncontrolled harm path placed inside a hospital.

The Warning Paths.

A dangerous system is morally safer when warnings can travel quickly from the harmed locus to the loci capable of repair.

In the Therac-25 field, the warning path required information to travel from the patient’s body to the patient’s report to the operator to the clinic, then to the physicist or physician, then to the manufacturer, then to other users and to regulators, and then to design changes, then to future patients.

Every layer of that absurd pipeline could either preserve the path or thicken resistance along it.

If the patient’s report is dismissed, the path narrows. If the operator has no clear machine data, the path narrows. If the clinic treats the event as isolated, the path narrows. If the manufacturer cannot reproduce the failure and therefore discounts it, the path narrows. If users are not promptly warned, the path narrows. If regulators lack timely information, the path narrows.

When enough warning paths narrow at once, like they obviously would in the system I just described, that system becomes morally worse even before the next accident. The harm is already in the field because the field cannot manage to reliably learn from its own contact with reality.
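A rough way to see why simultaneous narrowing is so damaging is to treat the warning path as a chain in which each stage passes the warning along with some probability. The sketch below is only an illustration: the stage names paraphrase the conditions listed above, the 0.8 values are made up, and the assumption that stages are independent is a simplification, not a finding about the actual accidents.

```python
from math import prod

# Hypothetical pass-through probabilities for each stage of the warning path.
STAGES = {
    "patient report is taken seriously": 0.8,
    "operator has clear machine data": 0.8,
    "clinic links the event to others": 0.8,
    "manufacturer acts without reproduction": 0.8,
    "other users are promptly warned": 0.8,
    "regulators receive timely information": 0.8,
}


def reach_probability(stages: dict) -> float:
    """Chance a warning survives every stage, assuming stages are independent."""
    return prod(stages.values())


def with_stage(stages: dict, name: str, value: float) -> dict:
    """Return a copy of the stages with one pass-through probability changed."""
    updated = dict(stages)
    updated[name] = value
    return updated


baseline = reach_probability(STAGES)
degraded = reach_probability(
    with_stage(STAGES, "operator has clear machine data", 0.2)
)
print(f"baseline: {baseline:.3f}")
print(f"one stage narrowed: {degraded:.3f}")
```

Even when every stage is individually fairly reliable, six stages in series let only about a quarter of warnings through under these assumed numbers, and narrowing a single stage cuts that to under seven percent. The numbers are arbitrary; the multiplicative structure is the point.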

The Illusion of Normalcy.

Safety-critical systems most often appear to be safest between accidents. This seems like an obvious claim.

A machine can function normally many times and still contain an absolutely catastrophic reachable path. A hospital can treat many patients successfully with it and still be exposing the next one to hidden risk. A manufacturer can point to its ordinary performance and still be very wrong about the field.

The Therac-25 field was dangerous because the ordinary successful treatments helped sustain confidence in the system. The machine could work, and often did. That made the equally real failures harder to interpret. If the machine had failed constantly and visibly, the field might have been corrected sooner. Instead, the danger appeared intermittently, only under specific conditions, and still inside a system whose general usefulness was very real. Normal operation is evidence, not proof.

Many harmful systems are not harmful because they fail all the time, or even often. They are more often harmful because they succeed often enough to preserve trust while also failing catastrophically under conditions the field refuses to understand. The system's success becomes a part of the distortion.
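The claim that normal operation is evidence rather than proof can be put in numbers. As a minimal sketch, assume (optimistically) that each treatment is an independent trial with the same small failure probability. After `n` treatments with no failure, the largest failure probability still consistent with that record at a given confidence level solves `(1 - p) ** n = 1 - confidence`, which for 95% confidence is roughly `3 / n` (the statistical "rule of three"). The function name and the treatment counts below are illustrative, not data from the Therac-25 record.

```python
def failure_rate_upper_bound(n_successes: int, confidence: float = 0.95) -> float:
    """Upper bound on per-treatment failure probability after a clean record.

    Assumes independent, identically distributed treatments, which is an
    optimistic simplification for a machine that fails only under
    specific, rarely exercised conditions.
    """
    if n_successes <= 0:
        raise ValueError("need at least one observed treatment")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    alpha = 1.0 - confidence
    return 1.0 - alpha ** (1.0 / n_successes)


for n in (100, 1_000, 10_000):
    bound = failure_rate_upper_bound(n)
    print(f"{n} clean treatments: failure rate could still be up to {bound:.5f}")
```

A thousand uneventful treatments are compatible with a failure rate of about three in a thousand. When the failure in question is lethal, that bound is nowhere near reassuring, and it becomes weaker still if the dangerous conditions are ones that ordinary use seldom triggers.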

Therac-25 as an Extant Locus.

The Therac-25 itself can also be analyzed as an extant locus, as it is a bounded technical system with inputs, outputs, states, constraints, dependencies, failure modes, and reachable transitions.

This was not a passive object. The machine actively mediated the futures of its patients and operators. It accepted instructions, changed modes, reported conditions, delivered radiation, stopped treatment, displayed messages, and shaped what human participants could know or do next.

In much the same way that Sydney had interactional agency, Therac-25 had no moral agency but did still have agency. The datacenter had infrastructural agency through power, water, heat, and computation. The Therac-25 had clinical-operational agency. It could never intend, but it could choose and could still alter the field.

A technical system does not need consciousness to be morally relevant, just the field effect.

The Blame Game.

It would be easy and probably entertaining to make this article a hunt for the guilty party.

There are real responsibilities here: manufacturer responsibility, design responsibility, institutional responsibility, regulatory responsibility, clinical responsibility. Those should not be ignored, but blame doesn't actually explain the moral structure to us.

Blame is secondary to the common field question: what happened to reachable futures, and how were paths of repair opened or closed?

Well, the patients involved in accidents suffered the most direct and obvious contraction.

The operators were placed in a narrowed perception-and-action field.

The hospitals depended on a machine whose hidden states they could not fully inspect.

The manufacturer held central knowledge and design power.

The regulators were responsible for preserving the public safety paths.

Future patients were exposed by the systemic delays in recognition and correction.

And the machine itself was a technical locus without moral agency whose design permitted catastrophic transitions.

If the analysis collapses into “the programmer caused it,” or “the operator caused it,” or “the company caused it,” the field is under-described and disappears from view.

What Should Have Happened.

A safety-critical system should obviously not be allowed to continue normal operation after credible evidence of catastrophic hidden failure unless the field can show that the dangerous path has been identified, blocked, and communicated.

This is especially true where patients, passengers, workers, or the public cannot inspect the risk for themselves.

In the Therac-25 case, the correct moral response to unexplained severe injury should have been immediate field-preserving action:

Stop or sharply restrict use; warn all users; preserve and share incident data; treat patient reports as evidence; give operators clear authority to halt; investigate the system as a whole, not just isolated components; add independent safeguards; make failure modes visible; involve regulators quickly; and do not resume ordinary use until safe operation can be proven and justified.

This is not a description of hindsight perfectionism. There is no reason why any of that should not have been the reasonable path to follow. This is the minimum discipline required when one locus controls a machine capable of destroying another locus’s future in seconds.