Applied Case: The Datacenter

The important question is not whether or not the building is, in fact, digital infrastructure.

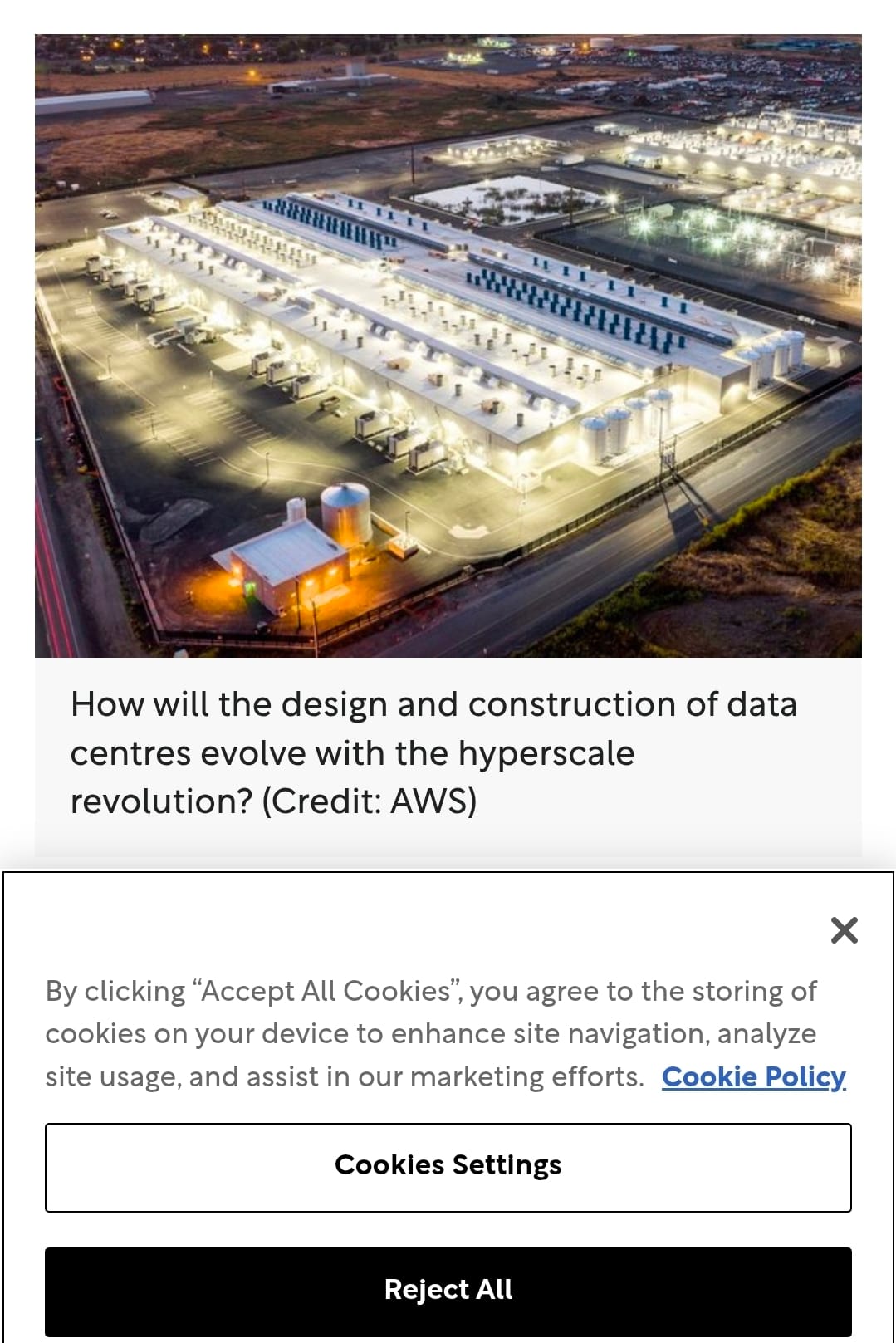

A datacenter is a building, or group of buildings, filled with computer servers and the equipment needed to keep those servers powered, cooled, connected, and running.

For a long time, this definition was enough for most purposes. A datacenter was part of the background machinery of the internet. It held websites, files, business software, photos, payment systems, search indexes, cloud applications, and all the other digital activity people had learned to treat as if it happened somewhere light and abstract.

The language we use for the internet helped hide the building that was always there.

“The cloud” is a very successful phrase because it makes the internet sound weightless. A cloud does not need a substation or ask a utility for hundreds of megawatts. A cloud does not pull water into a cooling system. A cloud does not require transmission lines, backup generators, land, chips, concrete, steel, tax deals, security fences, and diesel permits.

Clouds float by on the breeze, but The Cloud was never a cloud.

It has always been many places: large physical facilities where electricity, water, hardware, labor, land, capital, and public infrastructure are cleverly converted into computation.

That computation can do useful things. It can store medical records or route emergency information. It can support our scientific research or translate our languages. It can let a small developer use infrastructure they could never build alone. It can make education, analysis, design, communication, and coordination generally more reachable.

To put it in Modal Path Ethics terms: computation can lower resistance.

This is why the datacenter should not be cast as a simple villain, even though it has changed.

The older cloud always had a body, but the AI cloud has a larger and more demanding one. The issue is not simply that more datacenters are being built.

The issue is that the kind of datacenter current artificial intelligence demands differs from what came before. These facilities are denser, hotter, more power-hungry, more cooling-intensive, more urgent, more geographically strategic, and more likely to collide with local grids, watersheds, communities, and climate goals.

The moral problem begins here, not with the existence of a location for computation. We are dealing with the transformation of computation into a new infrastructure demand that is also being built faster than the surrounding field can truthfully absorb.

The Old Story.

Before the current AI buildout, the public story about datacenters was much more defensible.

Digital services were expanding, but datacenter efficiency had improved sharply. Workloads moved from smaller, less efficient enterprise server rooms into larger cloud and colocation facilities. Cooling had improved, server utilization had improved, power management had improved.

Hardware in general had become more efficient. Facilities had learned to do far more computation per unit of electricity.

Datacenters were never harmless, but the field had a clean efficiency story.

The digital world was growing a lot, but some of its resource pressure was being offset by consolidation and better design. The industry could say, with some justification, that larger facilities were often more efficient than the scattered server closets and older enterprise systems they replaced.

This was the earlier deal, and it made so much sense that almost no one noticed it.

There would be more digital activity, but better efficiency. More cloud dependence, but less waste per unit of work. More computation and resource allocation, but not the kind of load shock we are seeing now that AI has altered the arrangement.

What AI Changed.

Artificial intelligence did not just add another software category for existing datacenters to serve.

The advent of AI changed what the datacenter had to become. Modern AI workloads rely heavily on accelerated servers: systems built around GPUs and other specialized chips designed to perform the enormous parallel computation required for training and running large models.

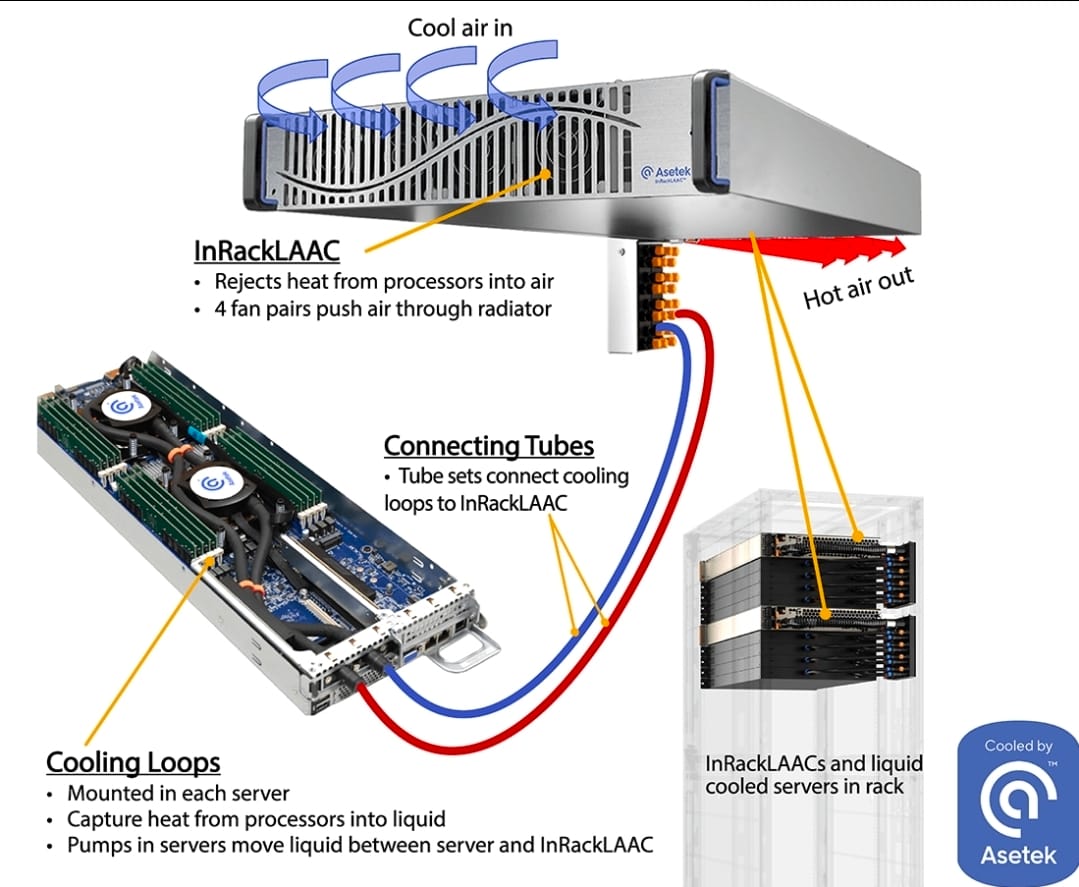

These systems are not just ordinary servers doing ordinary cloud work at a larger scale. Accelerated servers draw more power, produce more heat, require dense networking, and often need very different cooling and electrical design.

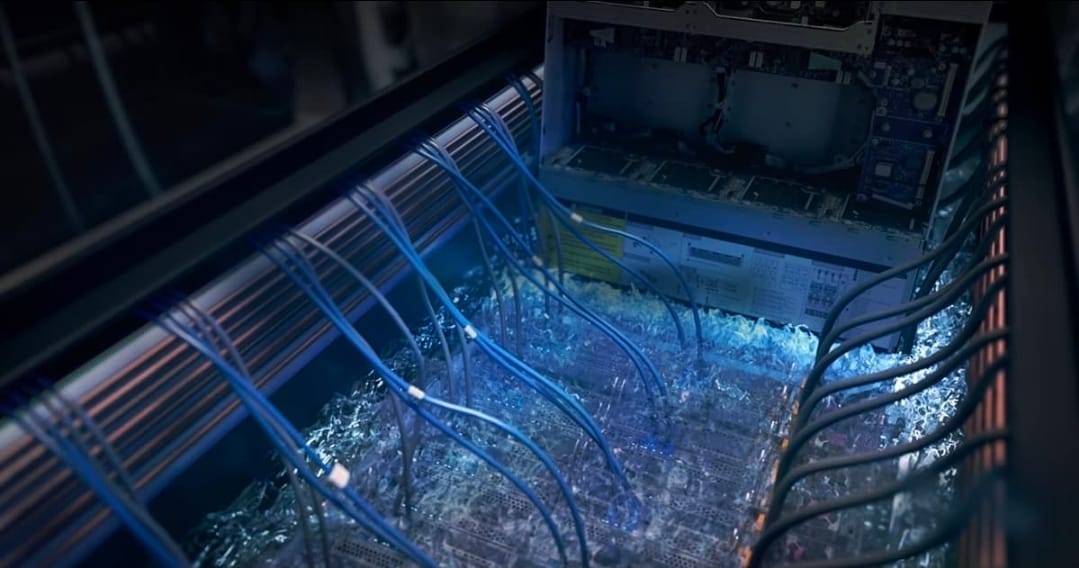

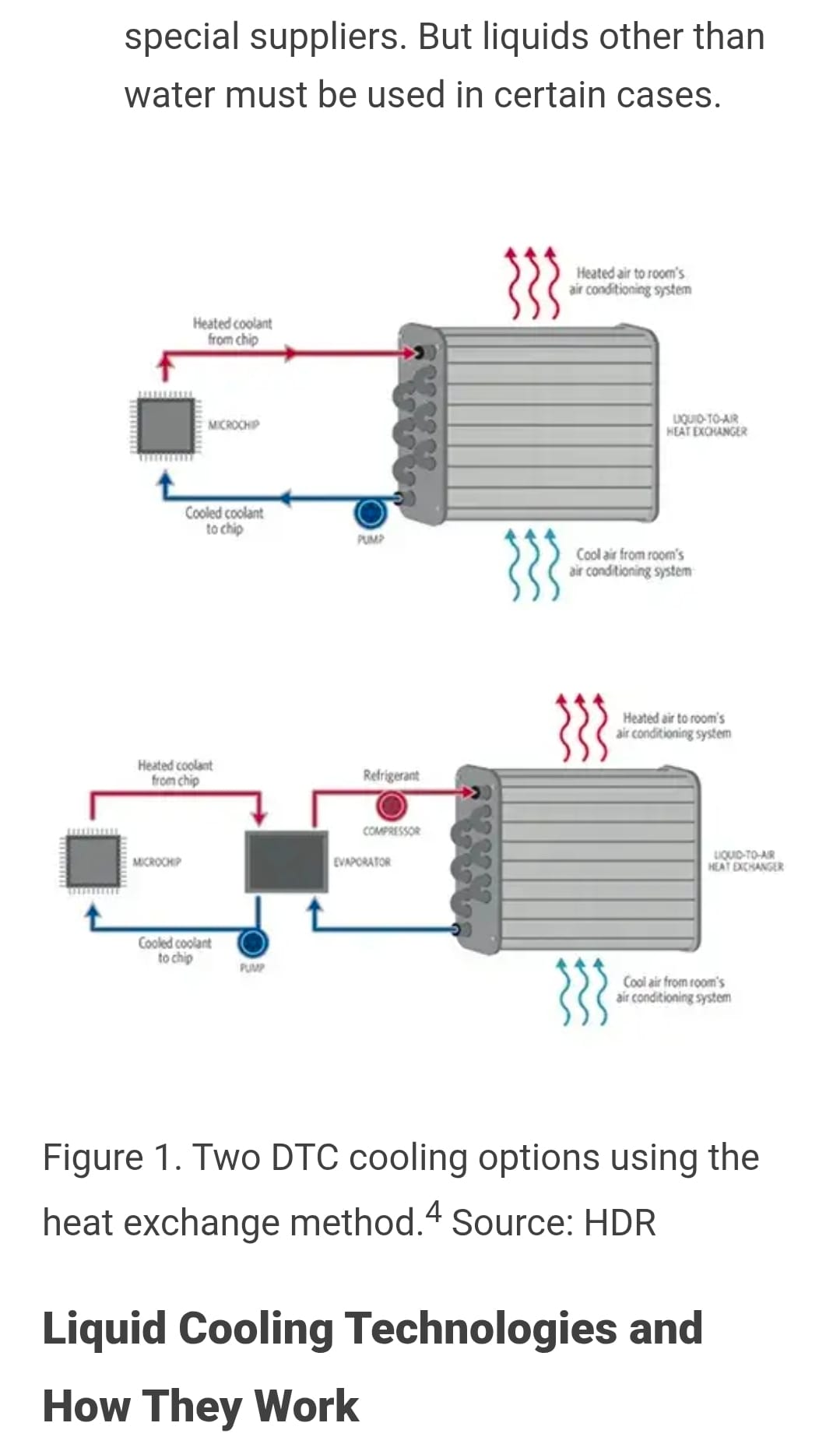

A traditional datacenter rack might have been designed around relatively modest power density. That assumption no longer holds: AI racks can demand far more. As power density rises, cooling becomes harder. Past a certain point, air cooling may no longer keep the servers within safe operating temperatures. When air is not enough, the facility moves toward liquid cooling, direct-to-chip cooling, or other more intensive thermal systems. Once those systems become the norm, the entire facility begins to look less like an office building full of servers and more like an industrial plant organized around heat removal.
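The chain of consequences above rests on one physical fact: nearly all electrical power delivered to IT equipment ends up as heat the facility must remove. The sketch below assumes that fact and uses a hypothetical per-rack air-cooling ceiling (`AIR_COOLING_LIMIT_KW`) and hypothetical rack densities; these are illustrative numbers, not industry standards or measurements.

```python
# Sketch: how rack power density turns into a heat-removal problem.
# Assumption: essentially all IT power is dissipated as heat.
HEAT_FRACTION = 1.0
# Hypothetical ceiling above which air cooling is assumed to be inadequate.
AIR_COOLING_LIMIT_KW = 30.0


def hall_heat_kw(racks: int, kw_per_rack: float) -> float:
    """Heat (kW) a data hall must remove, given rack count and density."""
    return racks * kw_per_rack * HEAT_FRACTION


def needs_liquid_cooling(kw_per_rack: float,
                         limit_kw: float = AIR_COOLING_LIMIT_KW) -> bool:
    """True when a rack exceeds the (hypothetical) air-cooling ceiling."""
    return kw_per_rack > limit_kw


# Hypothetical densities for the same 100-rack hall.
for label, density in [("traditional", 8.0), ("accelerated", 80.0)]:
    heat = hall_heat_kw(100, density)
    mode = "liquid" if needs_liquid_cooling(density) else "air"
    print(f"{label}: {heat:.0f} kW of heat, {mode} cooling")
```

Under these assumed numbers, the same 100-rack hall goes from 800 kW to 8,000 kW of heat, and only the second case crosses the assumed air-cooling ceiling.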

Everything inside has changed.

This is why “AI is just software” is not a serious description of the field as it now stands. AI may appear to the user as text, image, voice, code, search, or recommendation, but at the infrastructure level, AI is electricity moving through specialized chips and becoming heat that must be removed.

The Datacenter as an Extant Locus.

A datacenter is an extant locus.

There is no difficult metaphysical puzzle to be solved here. It is a bounded, active region of reality with inputs, outputs, dependencies, vulnerabilities, maintenance cycles, failure modes, and future-structure.

A datacenter takes in electricity. It may take in water. It takes in hardware, land, labor, capital, rare minerals, network access, and public permission.

In exchange, it gives out computation, heat, noise, economic activity, tax revenue, emissions depending on the power mix, waste hardware, and institutional dependence.

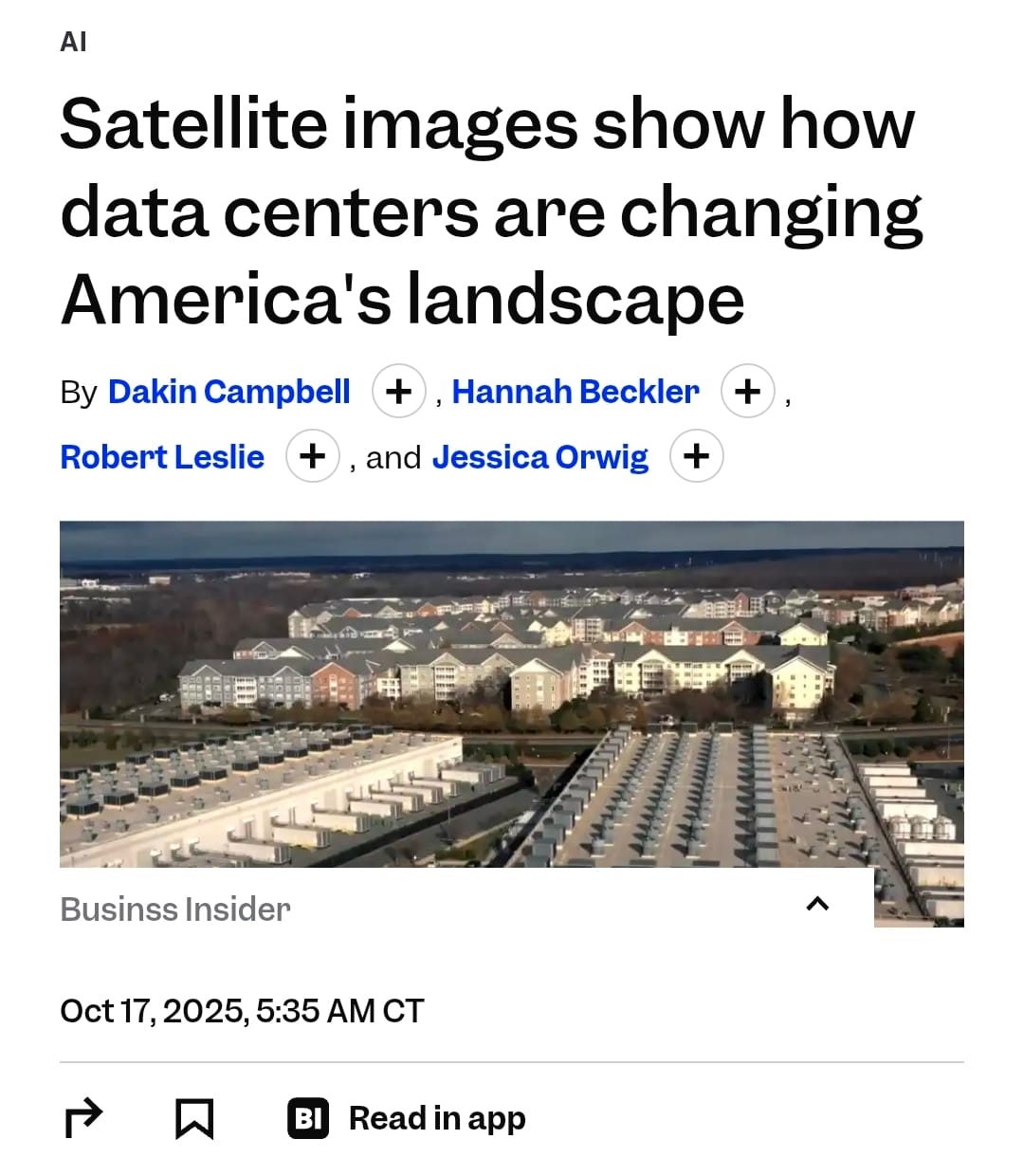

This locus exists inside larger loci. A datacenter is never just “over there.”

It is always over there in relation to something else: connected to a substation, a power plant, a transmission plan, a utility rate structure, a cooling method, a permitting process, a tax arrangement, a public narrative, and a set of workloads someone considers valuable enough to run.

Under Modal Path Ethics, the question is not whether datacenters consume too many resources. Every extant process consumes. Life consumes. Civilization consumes. The question is what this consumption opens, what it closes, who receives the opening, who receives the burden, and whether the same benefit could be reached through a less-closing path.

That is where the current AI datacenter buildout becomes morally unstable.

What Datacenters Open.

Datacenters open real futures.

They make large-scale computation reachable. A society without strong digital infrastructure loses access to many forms of coordination, research, communication, storage, and analysis. Medical systems, universities, emergency services, businesses, governments, creators, scientists, students, and ordinary people all depend on computation in our world.

Computation can lower the cost of technical assistance.

It can help people write, code, translate, study, summarize, search, design, test, and prototype.

It can help researchers analyze complex data.

It can support accessibility tools.

It can assist small teams that would otherwise be locked out of expensive expertise.

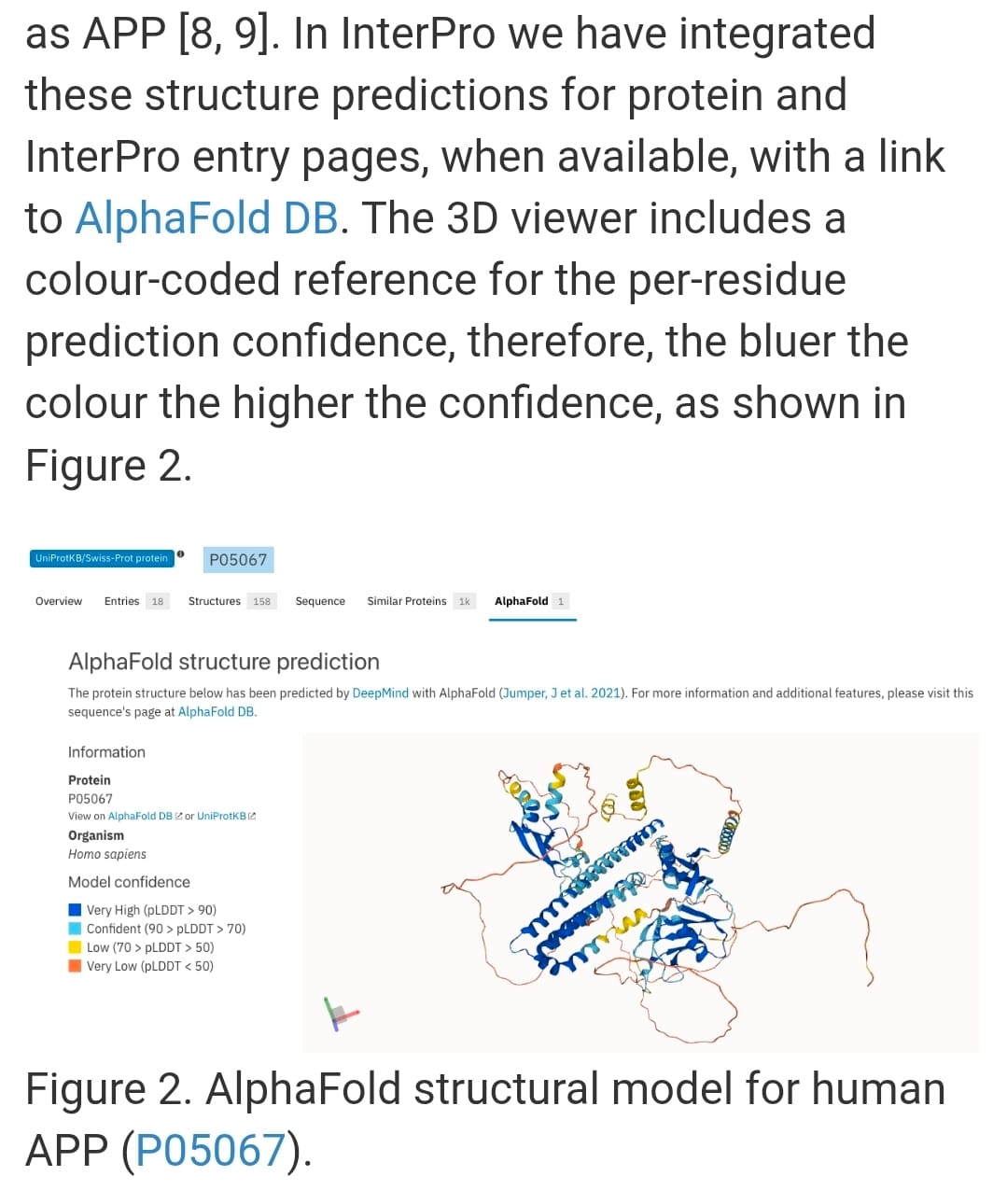

It can help model weather, proteins, materials, logistics, infrastructure, and disease.

It can let an individual reach work that previously required an entire institution.

The fact that AI has real costs does not make every use frivolous.

A model used to accelerate medical research is not morally identical to a model used to generate spam.

A datacenter supporting accessibility tools is not morally identical to one supporting predatory engagement systems.

A compute cluster used for climate modeling is not morally identical to one used to automate scams or flood the web with synthetic filler.

The moral status of a datacenter depends, in part, on what its computation is for.

What Datacenters Close and Burden.

Datacenters also close and burden futures.

They require electricity. That electricity always has to come from somewhere.

It may come from clean power, fossil power, nuclear power, hydroelectric power, stored power, or some arcane mixture hidden inside contracts and accounting. Even when a company buys renewable energy, the physical grid still has to balance real demand in real time. The electrons do not care about the press release.

A datacenter requires grid capacity. A large datacenter can demand as much power as a small city or a major industrial facility. When many are built in the same region, they can force utilities and grid planners to find generation, substations, transmission, and reliability solutions faster than public infrastructure normally moves.
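The scale comparison can be made concrete with simple arithmetic: a facility's annual energy is its average load times the 8,760 hours in a year. The sketch below assumes a hypothetical 100 MW average load and a round 10,000 kWh per household per year; both are placeholders to be replaced with local figures, not data about any real facility or utility.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours (ignores leap years)


def annual_energy_mwh(avg_load_mw: float) -> float:
    """Energy used in a year by a load running at avg_load_mw on average."""
    return avg_load_mw * HOURS_PER_YEAR


def household_equivalent(avg_load_mw: float,
                         household_kwh_per_year: float) -> float:
    """How many households' annual consumption that energy would cover."""
    annual_kwh = annual_energy_mwh(avg_load_mw) * 1000  # MWh -> kWh
    return annual_kwh / household_kwh_per_year


# Hypothetical inputs: 100 MW average load, 10,000 kWh per household-year.
print(annual_energy_mwh(100))             # MWh per year
print(household_equivalent(100, 10_000))  # household-equivalents
```

Under these assumptions, 100 MW of steady load is about 876,000 MWh a year, roughly the annual consumption of 87,600 such households. Grid planners also care about peak demand, not only annual energy, so this comparison understates the planning problem for a load that runs near its maximum around the clock.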

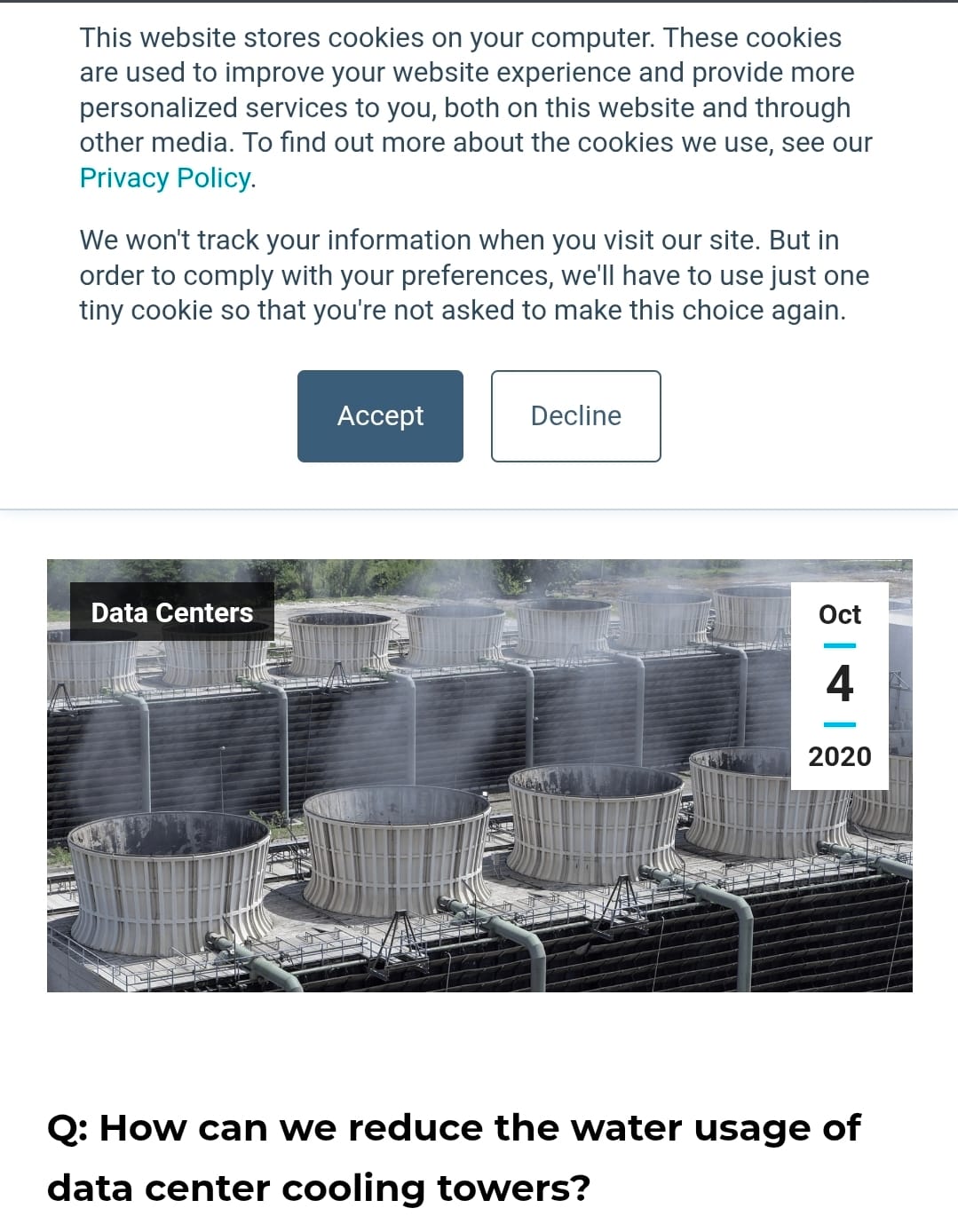

A datacenter may require water, especially one serving dense AI workloads. Some cooling systems consume water directly. Other facilities use less water on site but more electricity, which may shift the water burden upstream to the power system.

A facility can look water-efficient at the fence line while still drawing heavily on water through its energy supply.

A datacenter also requires land, construction, and backup systems. It brings noise, heat, traffic, security zones, and a general industrial presence.

It also produces hardware waste as chips age quickly and AI accelerators are replaced. It depends on supply chains that carry their own extraction, labor, geopolitical, and environmental burdens.

Datacenters also require public tolerance.

That tolerance is often purchased with jobs, tax revenue, development promises, and the language of innovation.

Sometimes those benefits are real and sometimes they are smaller than advertised. Sometimes the local community receives relatively few jobs after construction while inheriting a permanent infrastructure burden.

This is burden transfer in many directions.

A company receives compute. Users receive services. Investors receive growth. A local grid receives load. A watershed receives cooling demand. A rate base may receive infrastructure costs. A community receives land use and backup generators. A climate target receives another complication. An information field receives whatever the compute is used to produce.

So the AI datacenter does not merely consume resources. Above all, it relocates reachability through burden transfer.

Why The Field Became Unstable.

The current instability we have all noticed comes first from a timing mismatch.

Datacenters can be planned and built much more quickly than the energy systems that must support them. AI demand also moves faster than public infrastructure, utility planning, transmission buildout, water planning, and democratic oversight. A heating model race can change demand forecasts within months. A grid does not become ready in months just because the narrative demands it.

This creates pressure to solve the problem very badly.

One bad solution is to build first and just push the burden around later.

Another is to chase whatever power is fastest, whether or not it supports the broader transition away from harmful energy systems.

Another is to treat local communities as obstacles to infrastructure rather than as loci inside the field.

Another is to hide behind market instruments, offsets, renewable certificates, or selective accounting while the physical grid still carries the same stress.

Another is to make every workload sound socially vital, even though the building that runs a cancer model can also run an advertising model, a surveillance model, a spam system, a recommendation engine, or a vanity chatbot no one needed.

So the instability here is moral, not just technical.

The AI datacenter buildout is happening inside an already damaged field: aging grids, climate constraints, water stress, fragile public trust, concentrated corporate power, weak transparency, and communities that have too often seen infrastructure promises turn into local burdens.

Under such conditions, the pure Good may not be immediately available. Computation is already deeply embedded in civilization. AI is already being used widely. The burden is already being transferred. The question is not how to remain untouched by the evils of the system. That option is gone for most of us.

This is where we turn to the category of Better.

Better.

The datacenter is a very clean example of Better because every serious path contains cost.

If we stop building all new compute infrastructure, some harms may be avoided, but many real openings also close. Medical research, accessibility tools, education, scientific modeling, cybersecurity, small-team capability, and useful automation may become less reachable or be foreclosed entirely.

Compute scarcity can also concentrate power in the hands of those who already own the existing infrastructure.

Conversely, if we build without restraint, we risk transferring massive burdens to grids, watersheds, workers, communities, ratepayers, climate systems, and information environments. We may therefore widen the future for a small number of companies and individuals while narrowing it for everyone else. That is not a healthy field.

Neither path is pure. The Better path lies in disciplined compute.

That means building only where the downstream opening justifies the field cost, and building in the least-closing available way.

This requires a different moral vocabulary and imagination than “innovation good” or “AI bad.” Both slogans are obviously too coarse.

The moral question is not whether a datacenter exists, but whether this datacenter, in this location, powered this way, cooled this way, governed this way, serving these workloads, with these burdens and these benefits, preserves more weighted reachable future-space than its alternatives.

This is where the case becomes visible.

Weighting the Workload.

A datacenter is not morally separable from its workload.

This point should be obvious, but the public conversation often treats compute as a neutral abstraction.

It is not. Computation is directed. It always does something.

A datacenter used for public health modeling, scientific research, resilient communications, accessibility tools, education, and genuine productivity has one moral profile.

A datacenter used primarily to intensify surveillance, automate manipulation, generate low-value synthetic content, optimize addiction, replace workers without preserving their future-space, or produce advertising refinements for already-dominant platforms has a very different one.

You can measure the electricity in the same units, but not the moral field.

This is why efficiency alone cannot settle this issue. A harmful workload run efficiently can still be harmful. An efficient spam factory is not morally redeemed by a low power usage effectiveness score. A water-efficient manipulation engine remains a manipulation engine.
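Power usage effectiveness (PUE) is conventionally defined as total facility energy divided by the energy delivered to IT equipment, so a value closer to 1.0 means less overhead for cooling and power distribution. The sketch below, using two hypothetical facilities with made-up energy figures, shows why the metric cannot speak to the workload: it never sees what the computation is for.

```python
from dataclasses import dataclass


@dataclass
class Facility:
    name: str
    workload: str              # what the compute is for; PUE never reads this
    total_facility_kwh: float  # IT load plus cooling, power conversion, lighting
    it_kwh: float              # energy delivered to servers, storage, network


def pue(f: Facility) -> float:
    """Power usage effectiveness: total facility energy / IT energy."""
    if f.it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return f.total_facility_kwh / f.it_kwh


# Hypothetical facilities with identical energy profiles.
research = Facility("A", "medical research", 1_200_000, 1_000_000)
spam = Facility("B", "spam generation", 1_200_000, 1_000_000)

for f in (research, spam):
    print(f"{f.name} ({f.workload}): PUE = {pue(f):.2f}")
```

Both facilities report the same PUE of 1.20. The `workload` field is carried along but plays no part in the calculation, which is the point: the score measures overhead, not purpose.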

The first question is always what the compute is doing.

The second question is whether that work is worth its burden.

The third question is whether the same opening could be reached with less closure.

Weighting the Community.

A datacenter is usually justified at a large scale, in terms like national competitiveness, economic growth, AI leadership, cloud infrastructure, scientific progress, or technological inevitability.

But the burden always lands locally.

A particular community always gets the building. A particular utility always gets the load.

A particular watershed always gets the cooling demand. A particular road always gets the construction traffic.

A particular set of residents always gets the noise, backup generators, land conversion, and rate implications.

A particular region may find its future energy planning has been reorganized around facilities whose primary benefits then leave the area.

That still does not automatically make the datacenter unjustified, but it does mean local loci must not be treated as an empty hosting substrate.

A community is not morally answered by being told that “the nation needs AI.” The question is always what happens to that community’s own reachable future.

Does the project lower or raise local resistance?

Does it improve infrastructure for residents, or absorb capacity they need?

Does it bring stable benefits, or mainly temporary construction activity?

Does it increase water stress?

Does it shift utility costs?

Does it make the region more resilient, or more dependent on one corporate infrastructure path?

A datacenter can be a good neighbor only if it is actually a good neighbor in the field, not just in the brochure.

Weighting the Grid.

The grid is not an infinite background, like Deleuze's virtual.

It is always an extant system with constraints. It has generation, transmission, distribution, storage, maintenance, load balancing, reliability requirements, weather exposure, regulatory structure, and long planning cycles.

A large AI datacenter does not just “use electricity.” It becomes a major part of the local grid’s future, which can be harmful or helpful.

A datacenter that demands constant high power from an already strained grid may raise resistance for other users.

It may force new fossil generation, delay decarbonization, increase rates, or crowd out other electrification goals.

It may make housing, industry, transportation, or heating transitions harder.

But it is also true that a datacenter designed with flexibility, storage, clean power, demand response, and local grid support may become less harmful.

It may also help finance new generation and stabilize certain loads.

It may use curtailed renewable energy, or locate itself where power is abundant rather than in the places where subsidies are easiest.

It may also pay for the infrastructure it requires instead of socializing the burden.

A Better path requires pushing toward the second kind of datacenter where possible and rejecting the first where it hides its damage.

Weighting the Water.

Water in this context is one of the clearest examples of burden transfer.

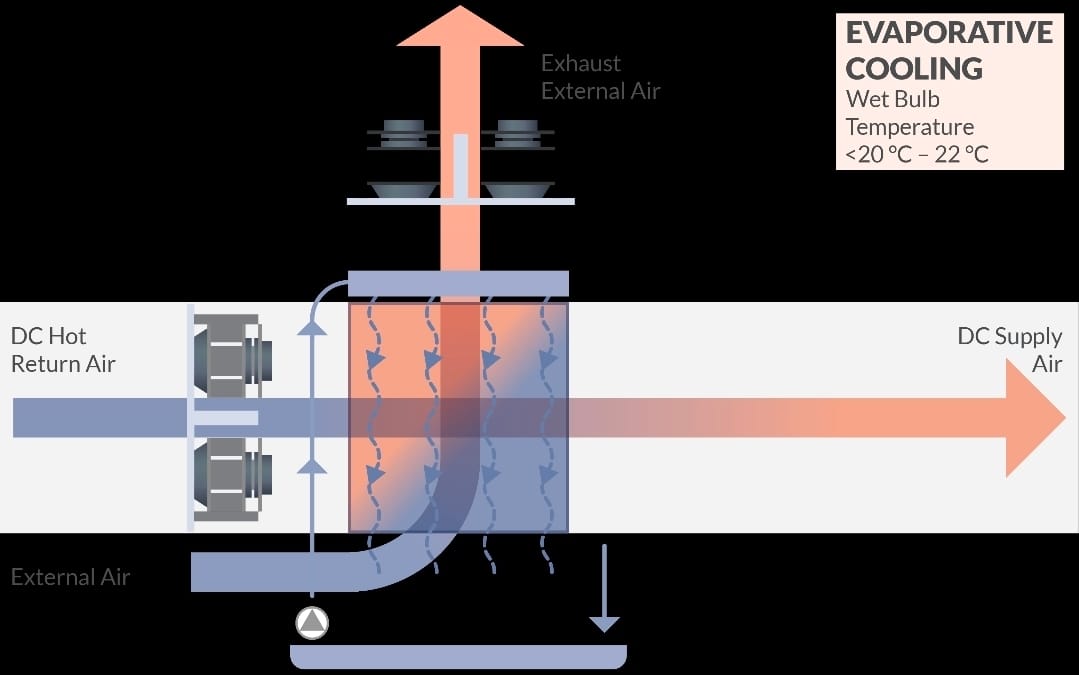

Cooling requires making choices. Air cooling, evaporative cooling, liquid cooling, direct-to-chip cooling, immersion cooling, chilled water systems, dry coolers, hybrid systems, and location-based design all distribute the burdens differently.

A facility can reduce electricity use by using water more aggressively, or it can reduce direct water use by using more electricity.

A facility can use recycled or non-potable water, or be located in a water-stressed region and become a local problem even if its global water number looks small.

A facility can report direct water use while leaving its indirect water use through power generation less visible.

The question is always where the burden goes.

If a datacenter saves electricity by consuming scarce local water, the field has not necessarily improved. If it saves water by increasing power demand from a fossil-heavy grid, the field has also not necessarily improved.
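The trade-off between on-site water and electricity can be sketched as a simple accounting identity: total water burden is direct on-site use plus the water consumed upstream to generate the facility's electricity. The two designs and the grid water-intensity values below are hypothetical; real intensities vary widely by generation mix and would have to come from local data.

```python
def water_burden(onsite_liters: float, energy_kwh: float,
                 grid_liters_per_kwh: float) -> dict:
    """Split a facility's water burden into on-site and upstream parts."""
    upstream = energy_kwh * grid_liters_per_kwh
    return {"onsite": onsite_liters, "upstream": upstream,
            "total": onsite_liters + upstream}


# Hypothetical designs: evaporative cooling (more water, less power)
# versus dry cooling (almost no on-site water, more power).
evaporative = {"onsite_liters": 200_000, "energy_kwh": 1_000_000}
dry = {"onsite_liters": 0, "energy_kwh": 1_200_000}

for grid in (0.5, 2.0):  # hypothetical liters of water per kWh generated
    a = water_burden(**evaporative, grid_liters_per_kwh=grid)
    b = water_burden(**dry, grid_liters_per_kwh=grid)
    lower = "evaporative" if a["total"] < b["total"] else "dry"
    print(f"grid {grid} L/kWh: evaporative {a['total']:,.0f} L, "
          f"dry {b['total']:,.0f} L -> lower total: {lower}")
```

Under these assumed numbers, the dry design has the lower total on the low-intensity grid and the higher total on the high-intensity one. Keeping `onsite` and `upstream` separate matters too, because the two parts may fall on different watersheds.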

If it claims water positivity through replenishment projects disconnected from the stressed watershed actually hosting the facility, then the field may be improved much less than that language suggests.

Weighting Institutional Concentration.

Datacenters also change institutional power.

Advanced AI infrastructure is expensive. The larger and denser the facilities become, the more the field favors companies that can buy chips, secure power, sign long-term energy deals, negotiate tax incentives, absorb regulatory delay, and build at hyperscale.

AI is becoming less like a product category and more like a layer of epistemic, economic, and administrative infrastructure.

The institutions that control compute may increasingly control who can train models, who can deploy them, who gets access, who pays, who is monitored, who is priced out, and which forms of intelligence become normalized. Compute concentration narrows some futures while opening others.

It may give individuals powerful tools while making them dependent on a few private gateways. It may let small teams do more while making entire professions subordinate to opaque platforms.

It may help public institutions, while also making public institutions reliant on private infrastructure they do not understand or control.

A datacenter is thus best described as a power relation made of concrete, chips, contracts, and lightning.

Bad Ethics.

There are two bad arguments that should be rejected immediately.

The first is that datacenters are bad because they consume energy.

That is too simple. A hospital consumes energy. A school consumes energy. A water treatment plant consumes energy. Consumption of energy is not automatically wrong.

The question is whether the consumption preserves or opens weighted future-space without avoidable burden transfer.

The second bad argument here is that datacenters are good because they support innovation.

That is also too simple. Innovation is not a moral solvent. It does not dissolve water stress, emissions, grid strain, labor displacement, surveillance, local opposition, or degraded information environments.

A thing can be new, profitable, impressive, and still structurally harmful. The field does not care whether the harm came from something exciting.

A serious analysis always asks what the datacenter makes more reachable and what it makes less reachable.

Better.

The first and most important step toward the Better path is transparency.

A datacenter should disclose enough about energy use, water use, cooling method, backup power, emissions profile, grid impact, and workload category for the public to evaluate what is happening.

Proprietary secrecy cannot be allowed to swallow the public burden. A community can never consent to an infrastructure project it cannot actually understand.

Second, datacenters should pay for the burdens they impose.

If a facility requires grid upgrades, those costs should not quietly move onto ordinary ratepayers while the company receives the compute. If it requires new generation, that generation should be additional, clean where possible, and physically relevant rather than a neat accounting maneuver.

If it increases local water stress, it should not be approved just because the global corporate sustainability page remains attractive.

Third, location is a moral choice and should be treated as such.

A datacenter should be built where power, cooling, land, transmission, water, and community conditions make sense, not wherever tax incentives and political weakness make approval easiest.

Building in a water-stressed region is not the same as building where cooling can be achieved with lower burden. Building on a constrained grid is not the same as building near abundant clean power.

Fourth, demand should be disciplined.

Not all compute deserves the same priority. A civilization should be willing to spend energy on medicine, science, accessibility, education, public infrastructure, and genuine productivity before it spends energy on synthetic deception, addictive engagement, or marginal advertising optimization.

Fifth, AI systems should be made more efficient at the model and software level, not only at the facility level. If demand expands faster than efficiency improves, efficiency becomes a permission structure for more consumption rather than a reduction in harm.
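The point about efficiency can be put as arithmetic: total energy is units of work times energy per unit, so an efficiency gain only lowers consumption if demand grows more slowly than efficiency improves. This is a simple form of what economists call the rebound effect. The percentages below are hypothetical.

```python
def energy_ratio(efficiency_gain: float, demand_multiplier: float) -> float:
    """Ratio of new to old total energy.

    efficiency_gain: fractional cut in energy per unit of work (0.25 = 25% less).
    demand_multiplier: factor by which units of work grow (2.0 = doubled).
    """
    if not 0 <= efficiency_gain < 1:
        raise ValueError("efficiency_gain must be in [0, 1)")
    return (1 - efficiency_gain) * demand_multiplier


# Hypothetical scenarios.
print(energy_ratio(0.25, 2.0))  # efficiency up 25%, demand doubles
print(energy_ratio(0.50, 1.5))  # efficiency up 50%, demand grows 50%
```

In the first scenario total energy rises by half despite a 25% efficiency gain; in the second it falls by a quarter. Only the second reduces total consumption.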

Sixth, where possible, datacenters should be designed as grid assets rather than passive loads. This includes storage, flexible operation, demand response, waste heat reuse where practical, and coordination with local energy planning.

Seventh, communities should have real standing. That does not mean symbolic consultation. That means the real ability to examine, contest, shape, benefit from, or reject projects that alter their local field.

These suggested reforms are the difference between compute that opens the field and compute that moves the closure in circles.

The Ruling.

People should not oppose datacenters as a category.

People should oppose irresponsible datacenter expansion.

That means opposing projects that hide energy demand, obscure water use, externalize grid costs, rely on fossil lock-in, weaken local resilience, serve low-value or harmful workloads, or use “AI innovation” as a blank check against public scrutiny.

But importantly, that also means supporting datacenter development when the field case is strong: when the facility is transparent, appropriately located, clean-powered in a physically meaningful way, water-responsible, grid-supportive, locally accountable, and tied to uses that genuinely widen reachable futures.

The important question is not whether or not the building is, in fact, digital infrastructure.

Datacenters are not evil in themselves, and we do have Better options.